Security Testing

Tomaso Vasella

When the Oxford English Dictionary added the verb to google to its list of words in June 2006, it became clear that the company from Mountain View had become an integral part of our digital society. Eight years later, Google is more than just a search engine: it is an increasingly popular choice for scheduling and e-mail, and an alternative to Microsoft’s Office suite.

![The semi-secret Google Research Department Google[x] develops all big projects at Google](labs/images/google_x.png)

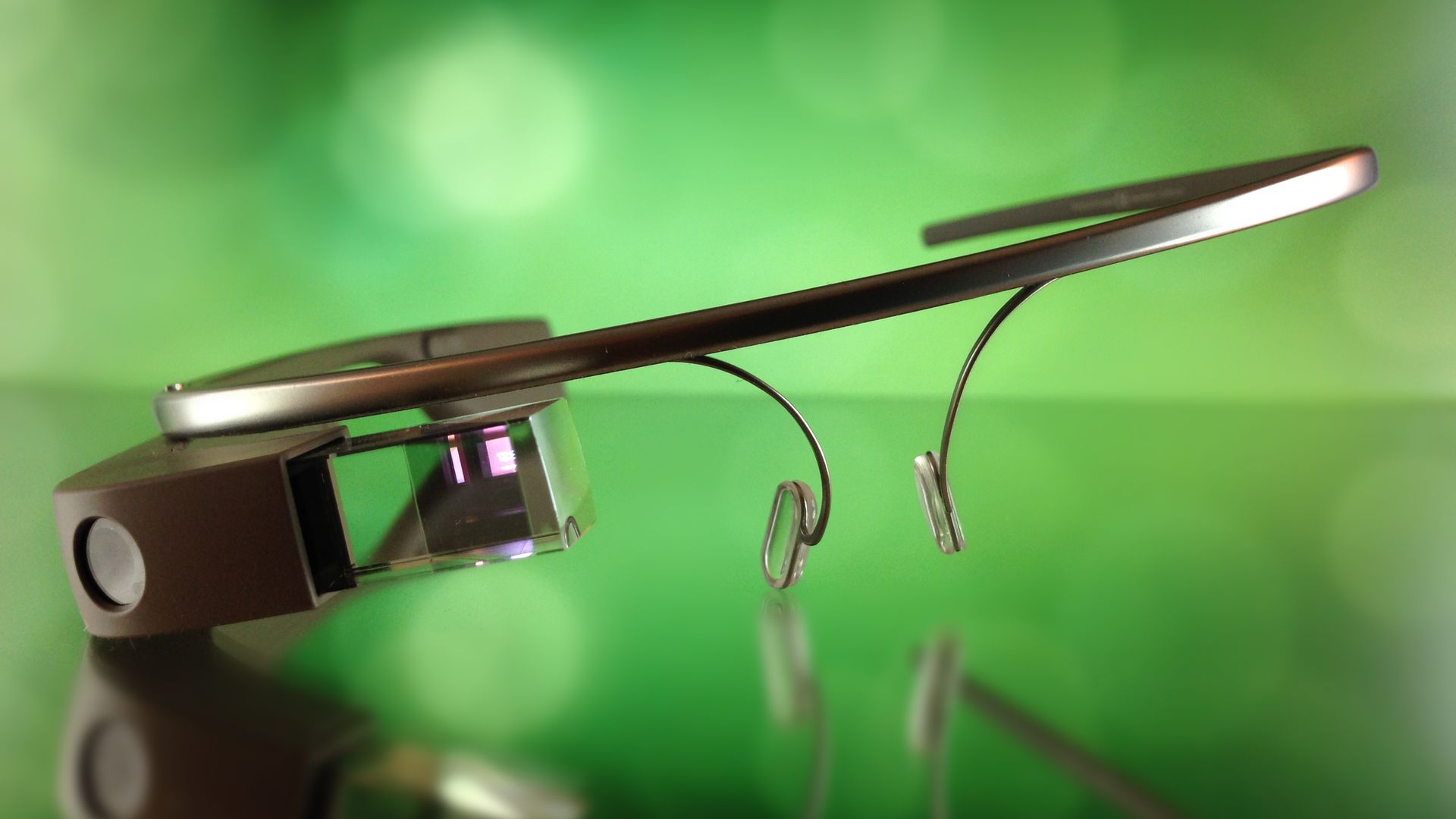

What isn’t quite as well known is Google[x], the semi-secret division of Google that has its headquarters about half a mile from the Googleplex at 1600 Amphitheatre Parkway. Google[x], under the supervision of Google co-founder Sergey Brin, has one mission: achieving major technological advances. Its projects are manifold; among them are the autonomous car, the mesh network of balloons known as Project Loon, and Google Glass.

Google Glass is what’s called an Optical Head-Mounted Display (OHMD) and a prime example of ubiquitous computing, the concept of a computer that is always present and always on. In brief: it’s a prism-like display mounted to a frame like the ones we know from glasses. It lets the wearer view data and manipulate it using speech input and touch.

While testing Google Glass, a certain sense of disillusionment sets in rather quickly. If you expected a high-resolution heads-up display that fills your entire field of vision, you’ll be disappointed. What you see is a display in the upper right corner of your vision. In fact, Google Glass is, at this point in time, little more than a second screen, an extension of a device that does the data processing for it. It works fairly well, but people who need glasses should either keep their contact lenses nearby or invest in the additional prescription lenses for the device. The author’s relatively weak prescription was enough to make Glass useless without contacts.

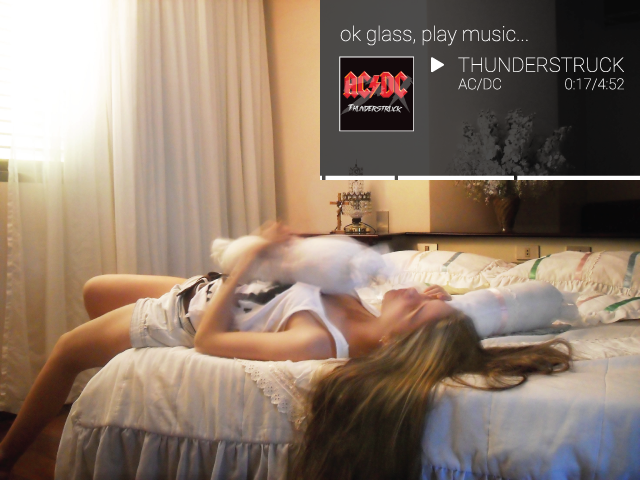

Back to the concept of the second screen: what differentiates Google Glass from other omnipresent mobile devices is that constant, uninterrupted use is at least theoretically plausible. While a mobile phone frequently disappears into pockets or bags, Google Glass stays in the wearer’s field of vision. The user sees notifications right away, without any delay. In addition, users are free to actively use the features the device offers. Among them is the possibility of taking a picture simply by blinking.

The possibilities that this constant availability within the field of vision offers are interesting: there’s no need to look anywhere but the street in order to find the next turn. Applications like Word Lens allow for the direct translation of Japanese Kanji or other signs in foreign languages without any knowledge of the writing system, even when both hands are occupied carrying luggage. The potential of OHMDs is huge. While Google Glass offers interesting starting points, future generations will make better and broader use of the technology, similar to what we were able to observe in the smartphone market. Currently, Google Glass is a nice gimmick, but its effective use is very limited.

Of course, all the possibilities described in this article can be abused. The constant availability of Google Glass carries many risks. You could dryly argue that Google Glass ends the trend of people watching live concerts only through the display of their smartphone, but that’s not the issue.

Compromising the device would effectively let the attacker see everything through the eyes of the victim, even in a private setting. In addition, Google Glass has access to the user’s entire Google Account, and it’s usually not protected by a screen lock or PIN code the way smartphones are.

There are documented attacks on Google Glass. A rather efficient one is so-called Wi-Fi hijacking, which can be executed using QR codes, among other things. Google Glass is programmed to read QR codes that point to a Wi-Fi network using the camera and to connect automatically. This greatly improves ease of use, because entering passwords and similar data is rather cumbersome on Glass. In an attack scenario, this means that an attacker can set up a malicious access point and place a malicious QR code in the user’s field of vision; once the user has connected to the malicious network, the attacker can execute a classic man-in-the-middle attack.
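To illustrate why automatic joining is risky, here is a minimal Python sketch of how a Wi-Fi QR payload could be decoded and turned into a confirmation prompt instead of a silent connection. It assumes the widely used `WIFI:S:<ssid>;T:<auth>;P:<password>;;` text format popularized by barcode scanner apps; the article does not state which payload format Glass actually reads, and the function names `parse_wifi_qr` and `confirmation_prompt` are hypothetical.

```python
def _split_unescaped(text, sep=";"):
    """Split on `sep`, honouring backslash escapes (the escapes are removed)."""
    parts, current, escaped = [], [], False
    for ch in text:
        if escaped:
            current.append(ch)      # escaped character is taken literally
            escaped = False
        elif ch == "\\":
            escaped = True          # next character is escaped
        elif ch == sep:
            parts.append("".join(current))
            current = []
        else:
            current.append(ch)
    parts.append("".join(current))
    return parts


def parse_wifi_qr(payload):
    """Parse an assumed 'WIFI:' QR payload into ssid, auth and password."""
    prefix = "WIFI:"
    if not payload.startswith(prefix):
        raise ValueError("not a Wi-Fi configuration payload")
    fields = {}
    for part in _split_unescaped(payload[len(prefix):]):
        key, colon, value = part.partition(":")
        if colon:                   # skip empty or malformed segments
            fields[key] = value
    return {
        "ssid": fields.get("S", ""),
        "auth": fields.get("T", "nopass"),
        "password": fields.get("P", ""),
    }


def confirmation_prompt(config):
    """Build the question a device could show before joining (hypothetical policy)."""
    return "Join Wi-Fi network {!r} ({})?".format(config["ssid"], config["auth"])


if __name__ == "__main__":
    cfg = parse_wifi_qr("WIFI:S:Cafe\\;Free;T:WPA;P:secret;;")
    print(confirmation_prompt(cfg))
```

The point of the sketch is the last step: anything the camera happens to decode is attacker-controlled input, so a device would ideally ask before acting on it rather than connect on its own.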

This vulnerability can be exploited not only with QR codes but also with classic Wi-Fi hijacking, where an attacker simply copies the SSID of a known access point. Glass interprets it as the real thing and connects to the seemingly trusted access point. The consequences are the same as those described above.
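The weakness here is that an SSID is only a name and proves nothing about who operates the access point. As a rough illustration, the following Python sketch flags access points that announce a trusted SSID from a hardware address (BSSID) that has not been seen for that network before. The data structures and the function name `find_suspect_access_points` are assumptions made for this example, and the check is only a heuristic: BSSIDs can be spoofed as well, and legitimate networks often use several access points.

```python
def find_suspect_access_points(scan_results, trusted):
    """Return (ssid, bssid) pairs that reuse a trusted SSID with an unknown BSSID.

    scan_results: iterable of (ssid, bssid) tuples from a Wi-Fi scan.
    trusted: dict mapping each trusted SSID to a set of known BSSIDs (lowercase).
    """
    suspects = []
    for ssid, bssid in scan_results:
        known_bssids = trusted.get(ssid)
        if known_bssids is None:
            continue                      # SSID is not one we trust; ignore it
        if bssid.lower() not in known_bssids:
            suspects.append((ssid, bssid))
    return suspects


if __name__ == "__main__":
    # Hypothetical example data.
    trusted = {"OfficeNet": {"aa:bb:cc:00:00:01"}}
    scan = [
        ("OfficeNet", "AA:BB:CC:00:00:01"),   # the known access point
        ("OfficeNet", "de:ad:be:ef:00:02"),   # same name, unknown hardware
        ("CoffeeShop", "11:22:33:44:55:66"),  # unrelated network
    ]
    for ssid, bssid in find_suspect_access_points(scan, trusted):
        print("Possible copy of", ssid, "at", bssid)
```

A client that only compares network names, as described above, has no such second signal and will treat the copied SSID as the trusted network.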

Both attack vectors were exploited successfully and with surprising efficiency during the scip AG test series. Especially with the blink photography feature, a QR code is easily slipped into the picture and is interpreted by Glass surprisingly often.

Google Glass is not just a technology under attack; it can also be used as a tool for attacks. The data privacy issue is obvious when people in public spaces can take pictures and record video of third parties without their knowledge. The difference between using a conventional camera or smartphone, which has to be aimed at the person being recorded, and using Google Glass is as significant as it is obvious.

Researchers have shown that the video feature of Google Glass can be used highly effectively to spy on PIN codes and other credentials such as passwords. This works not only by looking over the victim’s shoulder but also by other means, such as observing shadows. It’s not too surprising that businesses such as casinos are prohibiting the use of Google Glass on their premises.

Google Glass is a prominent member of a completely new family of computers that will keep us busy in the years to come, not just as a security industry but as a society. Since scip received its prototype directly from Google about six months ago, there has been an incredible amount of development and progress, and we can safely assume that there will be another great leap forward with the release of the new SDK, even before the limits of the current hardware are reached. Thus, this article is just what it says in the title: a momentary snapshot. And it won’t be the last of its kind.
