Transition to OpenSearch

Rocco Gagliardi

Logs were born to inform humans about the status of a specific system and, in case of a problem, to serve as a record of what happened. Sometimes the message sounds like a joke*; a good example is "xen_netfront: xennet: skb rides the rocket: 21 slots". This is human-to-human communication.

In the wonderful world of SIEM (Security Information and Event Management), the plethora of messages generated by different systems (or parts of systems) is interpreted and correlated: processed or, better, pre-processed by a machine for the human end user.

In the era of the IoT (Internet of Things), we will witness a basic paradigm change: the message generated by a machine will be used by another machine, not by a human.

I’m not sure what John Gage had in mind with the statement “The Network is the Computer”, but I don’t think he meant the IoT. The IoT refers to a huge number of interconnected devices that run with very little human intervention. We install them in our houses and wear them during training; some travel with us and some remain at home, informing us about the location of our goldfish or the status of our fridge.

In industry, the IoT is used to monitor the status of very complex installations, using thousands of sensors that produce millions of samples, and it helps save a lot of money. Data generated by these devices (sensors, actuators, etc.) can be used to predict system behavior and to improve future versions.

How does the traditional log compare with the IoT log?

| Key | Classic Log | IoT Log |
|---|---|---|
| Avg number of logs [1/s] | low | high |
| Avg length of log [bytes] | high | low |
| Validity time range | minutes to days | microseconds to seconds |
| Log content | State transfer | Telemetry |
| Log main scope | History | Precog |
| Communication streams | Machine ⇒ Human | Machine ⇒ Machine |

As a use case, take power plant monitoring. Some of the advantages of constantly monitoring the operating parameters of a complex machine such as a gas turbine:

Possible consequences:

Extend the idea to other parts of the system, and you will quickly have a lot of sensors to monitor:

| Parameter | Value |
|---|---|
| Sensors | Analog: 30,000, Digital: 20,000 |
| Sampling rate | Analog: 50 ms, Digital: 1 s |
| Data type | Analog: float, Digital: short-int |
| History | 1 to 3 years |
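To get a feeling for the resulting data flow, the figures in the table can be turned into a rough estimate. The sketch below assumes 4 bytes per float and 2 bytes per short-int (the table does not state the sizes) and counts only the raw values, without timestamps, keys, protocol overhead or compression. Under these assumptions the plant produces 620,000 samples per second, about 2.44 MB/s, or roughly 77 TB per year of history.

```python
# Figures from the table above.
ANALOG_SENSORS = 30_000
DIGITAL_SENSORS = 20_000
ANALOG_PERIOD_MS = 50
DIGITAL_PERIOD_MS = 1_000

# Assumptions (not stated in the table): 32-bit float, 16-bit short int.
ANALOG_BYTES = 4
DIGITAL_BYTES = 2

SECONDS_PER_DAY = 86_400


def analog_rate() -> int:
    """Analog samples per second over all analog sensors."""
    return ANALOG_SENSORS * 1_000 // ANALOG_PERIOD_MS


def digital_rate() -> int:
    """Digital samples per second over all digital sensors."""
    return DIGITAL_SENSORS * 1_000 // DIGITAL_PERIOD_MS


def samples_per_second() -> int:
    return analog_rate() + digital_rate()


def payload_bytes_per_second() -> int:
    """Raw value bytes only: no timestamp, key or protocol overhead."""
    return analog_rate() * ANALOG_BYTES + digital_rate() * DIGITAL_BYTES


def stored_bytes(days: int) -> int:
    """Uncompressed raw payload accumulated over the given number of days."""
    return payload_bytes_per_second() * SECONDS_PER_DAY * days


if __name__ == "__main__":
    print(f"samples/s      : {samples_per_second():,}")
    print(f"payload bytes/s: {payload_bytes_per_second():,}")
    for years in (1, 3):
        terabytes = stored_bytes(365 * years) / 1e12
        print(f"{years} year(s)      : {terabytes:.1f} TB")
```

Real storage needs will differ, since every sample also needs a key and a timestamp, while time-series databases usually compress well.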

The infrastructure needed to handle such a data flow, coming from so many different sources, diverges from the classical architecture and must ensure:

Alongside the classic logging technologies, new solutions specific to telemetry are being consolidated. No particular standard has been adopted, since the data are just k→v tuples. Regarding the communication protocol, almost every solution is based on IP. On top of IP, the choice is mainly between MQTT, XMPP and CoAP.
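As an illustration of how small such a k→v tuple can be compared with a classic log line, here is a minimal sketch. The binary layout (2-byte sensor id, 4-byte float, 8-byte timestamp in microseconds) and the sample log line are assumptions made for this example only; they are not a standard and not taken from any of the protocols mentioned.

```python
import struct
from typing import Tuple

# Hypothetical layout (assumption): unsigned 16-bit sensor id,
# 32-bit float value, unsigned 64-bit timestamp in microseconds.
# "!" selects network byte order with no padding between fields.
SAMPLE_FORMAT = "!HfQ"


def encode_sample(sensor_id: int, value: float, ts_us: int) -> bytes:
    """Pack one telemetry sample into a fixed-size binary tuple."""
    return struct.pack(SAMPLE_FORMAT, sensor_id, value, ts_us)


def decode_sample(payload: bytes) -> Tuple[int, float, int]:
    """Unpack a binary tuple back into (sensor_id, value, ts_us)."""
    return struct.unpack(SAMPLE_FORMAT, payload)


# A made-up classic log line carrying the same information for a human.
classic = "Jan 12 10:15:02 plant1 turbine[4711]: exhaust temperature is 612.5 C"
binary = encode_sample(1042, 612.5, 1_700_000_000_000_000)

if __name__ == "__main__":
    print("classic log line:", len(classic.encode("utf-8")), "bytes")
    print("telemetry tuple :", len(binary), "bytes")
    print("decoded         :", decode_sample(binary))
```

The tuple is not readable by a human, which is acceptable here: the consumer is another machine.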

In the storage area, the choice for telemetry goes to dedicated databases (Graphite/InfluxDB/Hadoop) and specific software to display charts or build dashboards. To bring the new features into old solutions, parsers/extractors may be used to extract performance data from existing logs and push it to telemetry databases while keeping the old processes in place.
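Such an extractor can be very small. The sketch below assumes a made-up log format (ISO timestamp, host, unit, then space-separated key=value pairs) and converts the numeric pairs into lines of Graphite's plaintext protocol, which is simply "metric.path value epoch-seconds". The log format, the host and unit names and the function name are hypothetical; sending the lines to a Graphite server is left out.

```python
import re
from datetime import datetime, timezone
from typing import List

# Numeric key=value pairs only; non-numeric values such as "status=OK" are skipped.
PAIR = re.compile(r"(\w+)=(-?\d+(?:\.\d+)?)(?=\s|$)")


def log_to_graphite(line: str) -> List[str]:
    """Convert one log line (hypothetical format) to Graphite plaintext lines."""
    timestamp, host, unit, rest = line.split(" ", 3)
    parsed = datetime.strptime(timestamp, "%Y-%m-%dT%H:%M:%SZ")
    epoch = int(parsed.replace(tzinfo=timezone.utc).timestamp())
    return [
        f"{host}.{unit}.{key} {value} {epoch}"
        for key, value in PAIR.findall(rest)
    ]


if __name__ == "__main__":
    sample = "2016-03-10T10:15:02Z plant1 turbine1 exhaust_temp=612.5 rpm=3000 status=OK"
    for metric in log_to_graphite(sample):
        print(metric)
```

The original log line can still be forwarded unchanged to the existing log management, so the old process stays in place.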

The key component is the IP protocol, especially the “new” version 6. The battery of many sensors, especially those designed for IEEE 802.15.4, must last for months; as a result, the device is not online all the time, cannot communicate at high speed or, sometimes, is simply out of range. Networks of this kind are known as LLNs (Low-power and Lossy Networks).

Below is a very short list of key points for each protocol.

| Protocol | Pro | Contra |
|---|---|---|
| MQTT | Pushed by IBM. Subscribed services (Many2Many). Two-way communication over unreliable networks. NAT is not critical. QoS in place (Fire-and-forget, At-least-once and Exactly-once). | Low power, but not for extremely constrained devices. Normally “online” all the time (addressed in MQTT-SN). Long topic names, impractical for 802.15.4 (addressed in MQTT-SN). |
| CoAP | Pushed by Cisco. Primarily a One2One protocol. Resource discovery. Interoperates with HTTP/REST. | The sensor is typically a server, so NAT must be designed carefully. Runs over UDP, so no SSL/TLS; DTLS can be used instead. |
| XMPP | Pushed by Cisco. Real-time. Massive scalability. Security. | Has not been practical over LLNs. Needs an XML parser. |
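The Many2Many model of MQTT can be illustrated with a toy, in-memory broker. This is only a sketch of the publish/subscribe idea and of simplified topic matching ("+" for one level, "#" for everything below); it is not an MQTT implementation, it has no network, sessions or QoS, and the class, function and topic names are invented for the example.

```python
from typing import Callable, List, Tuple

Handler = Callable[[str, bytes], None]


def topic_matches(pattern: str, topic: str) -> bool:
    """Simplified MQTT-style matching: '+' is one level, '#' is the rest."""
    pattern_levels = pattern.split("/")
    topic_levels = topic.split("/")
    for i, level in enumerate(pattern_levels):
        if level == "#":
            return True  # matches the parent level and everything below it
        if i >= len(topic_levels):
            return False
        if level not in ("+", topic_levels[i]):
            return False
    return len(pattern_levels) == len(topic_levels)


class ToyBroker:
    """In-memory stand-in for a broker: many publishers, many subscribers."""

    def __init__(self) -> None:
        self._subscriptions: List[Tuple[str, Handler]] = []

    def subscribe(self, pattern: str, handler: Handler) -> None:
        self._subscriptions.append((pattern, handler))

    def publish(self, topic: str, payload: bytes) -> int:
        """Deliver to every matching subscriber; return the delivery count."""
        delivered = 0
        for pattern, handler in self._subscriptions:
            if topic_matches(pattern, topic):
                handler(topic, payload)
                delivered += 1
        return delivered


# Two consumers, two producers; neither side knows about the other.
broker = ToyBroker()
dashboard: List[Tuple[str, bytes]] = []
alarm: List[Tuple[str, bytes]] = []
broker.subscribe("plant1/#", lambda t, p: dashboard.append((t, p)))
broker.subscribe("plant1/+/temp", lambda t, p: alarm.append((t, p)))
broker.publish("plant1/turbine1/temp", b"612.5")
broker.publish("plant1/turbine2/rpm", b"3000")
```

Here the dashboard receives both messages and the alarm consumer only the temperature. The full topic strings in every message also hint at why long topic names are a problem on constrained links.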

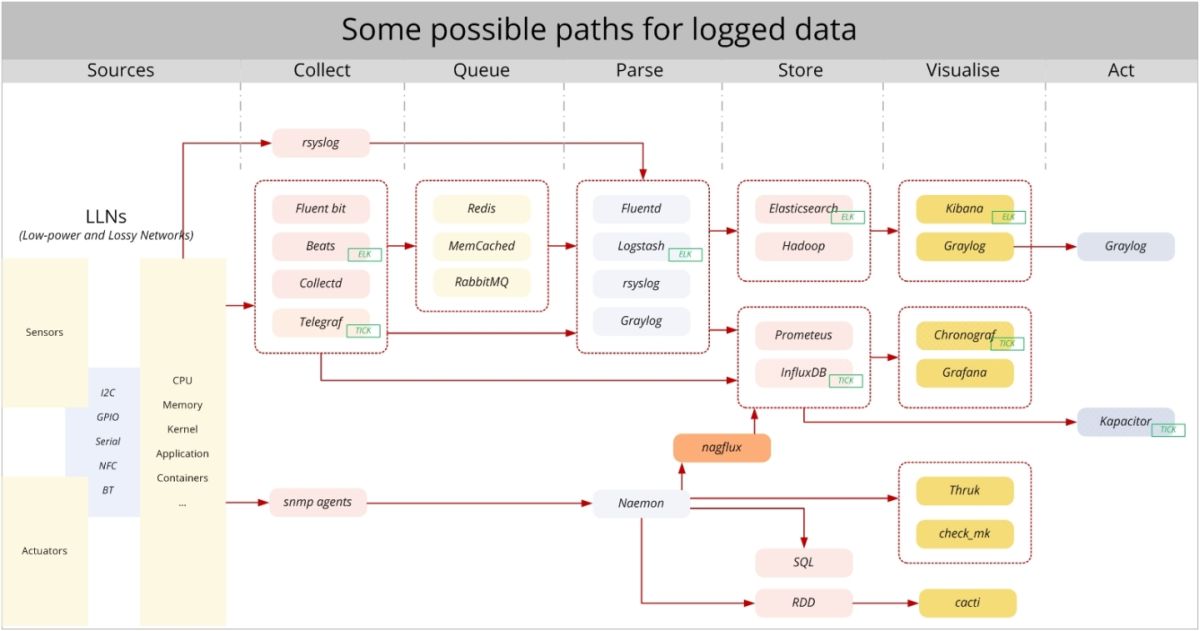

Below is a summary of products with references; refer to the schema for the interconnections between components. Please remember that the same process can be implemented in very different ways: just use the product most familiar to you.

| Phase | Components | Key to consider |
|---|---|---|
| Collect | Fluent Bit, collectd, Telegraf, Beats, rsyslog | System type. Framework already present. |
| Queue | Redis, Memcached, RabbitMQ | Routing customization. Performance. Delivery assurance. |
| Parse | Fluentd, Logstash, rsyslog, Graylog | Input / Output. Message parsing plugins. |
| Store | InfluxDB, Prometheus, Hadoop, Elasticsearch, RRD | Speed. Query language. Granularity. |
| Visualise | Kibana, Graylog, Chronograf, Grafana, Thruk, Cacti | Authentication / Authorization. Visualization types. Query language / Transformation functions. Dashboard customization. |
| Act | Graylog, Kapacitor | Triggering capabilities. Query language / Transformation functions. Storage. Integration. |
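How the phases hand data to each other can be sketched in a few lines, with each product replaced by a trivial in-process stand-in: a standard-library queue for the Queue phase, a dictionary for the Store phase and a threshold check for the Act phase. The input format ("key value timestamp"), the function names and the threshold are assumptions for the example; the Visualise phase is omitted.

```python
import queue
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

Sample = Tuple[str, float, int]  # (key, value, timestamp)


def collect(raw_lines: Iterable[str], q: "queue.Queue[str]") -> None:
    """Collect: push raw lines from a source into the queue."""
    for line in raw_lines:
        q.put(line)


def parse(line: str) -> Sample:
    """Parse: turn 'key value timestamp' into a typed tuple."""
    key, value, ts = line.split()
    return key, float(value), int(ts)


def run_pipeline(raw_lines: Iterable[str], threshold: float):
    """Run Collect -> Queue -> Parse -> Store -> Act once over the input."""
    q: "queue.Queue[str]" = queue.Queue()
    store: Dict[str, List[Tuple[int, float]]] = defaultdict(list)
    alerts: List[Sample] = []

    collect(raw_lines, q)
    while not q.empty():
        key, value, ts = parse(q.get())
        store[key].append((ts, value))       # Store: time series per key
        if value > threshold:                # Act: trigger on a simple rule
            alerts.append((key, value, ts))
    return dict(store), alerts


if __name__ == "__main__":
    lines = ["turbine1.temp 612.5 60", "turbine1.temp 655.0 61"]
    series, triggered = run_pipeline(lines, threshold=650.0)
    print(series)
    print(triggered)
```

In a real installation each function would be a separate product from the table, and the queue is what decouples the bursty collectors from the slower parsing and storage.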

Logging and using the enormous amount of data generated by the upcoming IoT infrastructure will be challenging for the whole IT infrastructure: for the network, for the CPUs, and for software development. A lot of solutions are popping up, all with pros and cons. Depending on the primary goals of the project, one solution (or a mix) may be better than another.

*) This “joke” appeared in our Xen infrastructure just after an upgrade, and wasn’t funny. For more, see Kernel Line Tracing: Linux perf Rides the Rocket.
