Security Testing

Tomaso Vasella

Every time I discuss cloud deployment and cloud migration with a customer, there are big concerns about cybersecurity. Although there is less discussion about the cybersecurity posture of the cloud providers themselves, it always comes down to a gut feeling that something hosted somewhere else may not be secure enough. Because let’s be clear on terms here: there is no cloud, it’s just someone else’s computer.

Still, when we’re talking about the major players out there (Microsoft, Amazon and Google), those computing infrastructures are better managed and secured than most of the environments I’ve seen in my 20+ year career in ICT security. This is because they actually implement cybersecurity risk frameworks as they should. Why is that, you may ask? Because without them they would soon be out of business, and because security is a key selling point. So you’ll find highly secured data centers where a physical access breach is very unlikely, along with almost all the security controls needed to reach the right cybersecurity posture. This comes at a cost, of course, but that cost is usually far more competitive than anything you could achieve on your own. During those discussions with customers I found myself explaining that (unfortunately) security operations don’t scale very well…

Cybersecurity operations usually need a framework of processes, procedures and tools that is well implemented and operated by well-trained personnel. Those costs are almost the same whether you’re a 100, 1’000 or 10’000 user company, so they do not scale down well for smaller organizations. Of course not everything should be deployed in the cloud, but the discussion about the security of cloud deployments is more nuanced than that. It should leave feelings aside, focus on the requirements, benefits and costs of a solution, and make sure to address the following key points:

When it comes to cloud security requirements, encryption (and its key management) plays a major role, but not all of the above points are of a technical nature. Security is a process, and you also need governance to ensure it. Still, even if you comply with all those requirements, there is something that cannot easily be secured in the cloud: process execution and the data it handles in RAM.

The problem here is that almost all content in memory during OS processing is in cleartext and easily accessible to unauthorized processes and/or personnel. Encryption of data at rest and in transit does not apply here. Since a physical processor in a cloud stack executes several virtual machines, and the RAM is likewise shared within the hardware enclosure, the possibility of reading cleartext data without even compromising the VM is a clear and present danger.

Let’s take an example to illustrate the assumption above. As I said, not every scenario makes sense to deploy in the cloud given its security requirements. Let’s take a PKI certificate authority. This is a demanding scenario, as satisfying the security requirements is already a challenge in a normal physical deployment. In such a scenario, losing control over the root private signing key would be catastrophic. Because the private key needs to be present in cleartext in the VM memory (and consequently in the RAM of the cloud hardware appliance) to allow key generation and signature processing, this cleartext data may be extracted without even compromising the VM or the customer’s virtual infrastructure.

In such a scenario, a fraudulent cloud administrator would be the major threat, and the cleartext key in the hypervisor’s memory allocation would be the vulnerability that leads to the catastrophic event. As already explained, a well-functioning cybersecurity risk framework helps mitigate this risk by using processes and procedures to lower the probability of the threat (security monitoring, separation of duties, etc.). Still, we are stuck with the vulnerability, because there is no technical solution to secure process execution itself. That is why even physical deployments rely on specialized hardware, the HSM (Hardware Security Module), to ensure that keys are never held in system RAM.

But wait, there is this thing called Software Guard Extensions (SGX), implemented by Intel in the new Skylake processors, that may just help out.

Intel announced SGX (Software Guard Extensions) in September 2013 as a technology to be implemented in its processors. It took some time to reach the mass market: it is only available with the latest processor generation, Skylake.

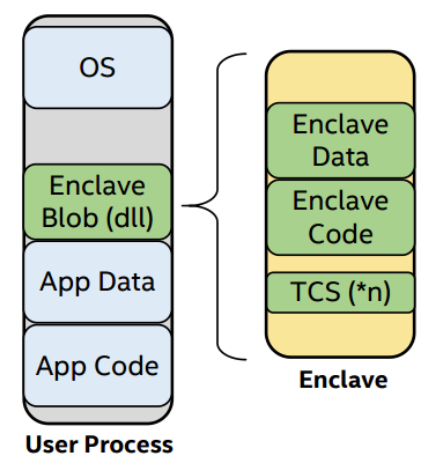

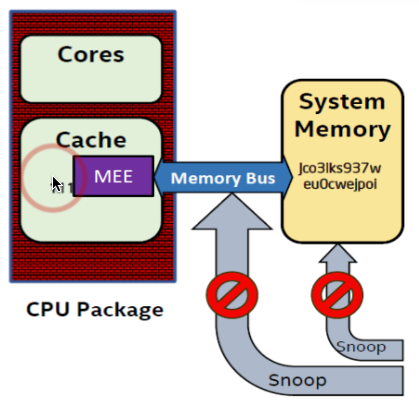

At its core, SGX is a set of x86-64 CPU extensions that make it possible to set up protected execution environments called enclaves. The processor controls access to enclave memory: instructions that attempt to read or write the memory of a running enclave from outside the enclave will fail. Enclave cache lines are encrypted and integrity-protected before being written out to RAM.
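To make the idea of "encrypted and integrity-protected before being written out to RAM" more tangible, here is a small Python sketch. It is a toy model under stated assumptions, not a description of how SGX is implemented: the class `ToyEnclaveMemory`, the SHA-256-based keystream and the HMAC tag are hypothetical stand-ins for the processor's hardware memory protection, and the code is not meant to be used as real cryptography.

```python
import hashlib
import hmac
import secrets


def _keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """Toy keystream built from SHA-256 in counter mode (illustration only)."""
    out = b""
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        counter += 1
    return out[:length]


class ToyEnclaveMemory:
    """Hypothetical model: the keys stay 'inside the CPU', RAM only holds ciphertext."""

    def __init__(self):
        self._enc_key = secrets.token_bytes(32)  # assumed to never leave the processor
        self._mac_key = secrets.token_bytes(32)
        self.ram = {}  # what anyone outside the enclave could observe

    def _tag(self, address: int, nonce: bytes, ciphertext: bytes) -> bytes:
        # Bind the tag to the address so a block cannot be moved unnoticed.
        message = address.to_bytes(8, "big") + nonce + ciphertext
        return hmac.new(self._mac_key, message, hashlib.sha256).digest()

    def write(self, address: int, plaintext: bytes) -> None:
        nonce = secrets.token_bytes(16)
        stream = _keystream(self._enc_key, nonce, len(plaintext))
        ciphertext = bytes(p ^ s for p, s in zip(plaintext, stream))
        self.ram[address] = nonce + self._tag(address, nonce, ciphertext) + ciphertext

    def read(self, address: int) -> bytes:
        blob = self.ram[address]
        nonce, tag, ciphertext = blob[:16], blob[16:48], blob[48:]
        if not hmac.compare_digest(tag, self._tag(address, nonce, ciphertext)):
            raise ValueError("integrity check failed: memory was modified")
        stream = _keystream(self._enc_key, nonce, len(ciphertext))
        return bytes(c ^ s for c, s in zip(ciphertext, stream))
```

In this model, someone who dumps `ram` only sees a nonce, a tag and ciphertext, and flipping a single bit in a stored block makes the next `read` raise a `ValueError` instead of returning manipulated data.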

Protecting sensitive data involves preventing data disclosure (confidentiality) as well as preventing data tampering (integrity), two important elements of an encryption solution. SGX protects both sensitive data and sensitive code: it provides the ability to encrypt data at rest as well as during execution, while the application is running.

Therefore, to protect sensitive data or code (like our PKI CA keys), you have to place it in enclaves, whose contents are encrypted and not visible from outside the enclave. As soon as the data leaves the VM, it’s encrypted. This prevents virtual infrastructure and storage administrators from accessing your data. By deploying SGX, we can enforce this with simple policies such as:

All Encryption Keys are stored in SGX enclaves (encrypted)

Thus if a snapshot of the raw hypervisor memory or of the entire VM is taken, the potential for sensitive data being written to disk in cleartext is eliminated. And as with all encryption solutions, if you hold the keys, you’re now completely in control of your data, which is truly safe from prying eyes.

The main difference between SGX and a standard architecture is that SGX’s threat model considers the system software to be untrusted. As explained earlier, this accurately captures the situation in remote computation scenarios, such as cloud computing. SGX’s threat model implies that the system software can be carrying out an attack on the software inside an enclave.

As a further example, a cloud service that performs image processing on confidential data (like medical images, customer data, etc.) could be implemented by having users upload encrypted payloads. The users would send the encryption keys to software running inside an enclave. The enclave would contain the code for decrypting the data and the code for encrypting the results. The code that receives the uploaded encrypted data and stores it would be left outside the enclave.
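The split between trusted and untrusted code described here can be sketched in a few lines of Python. This is only an illustration under several assumptions: `UntrustedHost`, `ToyEnclave` and `toy_xor_cipher` are hypothetical names, the cipher is a toy and not real cryptography, "image processing" is reduced to inverting pixel bytes, and the key is simply handed to the enclave object, whereas a real deployment would first have to establish a protected channel to the enclave.

```python
import hashlib
import secrets


def toy_xor_cipher(key: bytes, nonce: bytes, data: bytes) -> bytes:
    """Toy stream cipher (SHA-256 in counter mode); the same call encrypts and decrypts."""
    stream = b""
    counter = 0
    while len(stream) < len(data):
        stream += hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        counter += 1
    return bytes(d ^ s for d, s in zip(data, stream))


class UntrustedHost:
    """Code left outside the enclave: receives and stores ciphertext only."""

    def __init__(self):
        self.storage = {}

    def upload(self, name: str, nonce: bytes, ciphertext: bytes) -> None:
        self.storage[name] = (nonce, ciphertext)


class ToyEnclave:
    """Stand-in for enclave code: the only place where key and cleartext exist."""

    def __init__(self):
        self._user_key = None

    def provision_key(self, key: bytes) -> None:
        # Assumption: in practice the key would arrive over a protected channel.
        self._user_key = key

    def process(self, nonce: bytes, ciphertext: bytes):
        if self._user_key is None:
            raise RuntimeError("no key provisioned")
        pixels = toy_xor_cipher(self._user_key, nonce, ciphertext)  # decrypt
        inverted = bytes(255 - p for p in pixels)                   # "process"
        out_nonce = secrets.token_bytes(16)
        return out_nonce, toy_xor_cipher(self._user_key, out_nonce, inverted)


# User side: encrypt locally, upload ciphertext, hand the key to the enclave only.
key = secrets.token_bytes(32)
image = bytes([0, 10, 200, 255])
nonce = secrets.token_bytes(16)

host = UntrustedHost()
host.upload("scan", nonce, toy_xor_cipher(key, nonce, image))

enclave = ToyEnclave()
enclave.provision_key(key)
out_nonce, out_ciphertext = enclave.process(*host.storage["scan"])
result = toy_xor_cipher(key, out_nonce, out_ciphertext)  # decrypted by the user
```

The point of the design is that `UntrustedHost` never holds the key or any cleartext, so the amount of code that has to be trusted is limited to what runs inside the enclave.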

Is SGX fail-safe? Assuming Intel hasn’t built a backdoor into it, at this stage it looks pretty safe. Attacks on the software side (malware, reverse engineering, etc.) are not likely to succeed, as all relevant data is encrypted and integrity-protected by the processor.

Still, there are some possible attack scenarios at the hardware/microcode level. Below is a list with an estimated risk level for cloud computing:

| Issue | Description | Risk in Cloud |
|---|---|---|
| Chip Attacks | These attacks generally take advantage of equipment and techniques that were originally developed to diagnose design and manufacturing defects in chips. | very low |
| Power Analysis Attack | The attacker takes advantage of a known correlation between power consumption and the computed data, and learns some property of the data from the observed power consumption. | low |
| PCI Express Attacks | The PCIe bus allows any device connected to the bus to perform Direct Memory Access (DMA), reading from and writing to the computer’s DRAM without the involvement of a CPU core. | low |
| Attacks on the Boot Firmware and Intel ME | Virtually all motherboards store the firmware used to boot the computer in a flash memory chip that can be written by system software. An attack that compromises the system software can subvert the firmware update mechanism to inject malicious code into the firmware. | low |
| CPU Microcode Update | Reverse engineering of the CPU firmware to subvert SGX functionality. | very low |

Only time will tell whether SGX will withstand all possible attacks and find broader acceptance. Still, the technology is very interesting and a step in the right direction. Confirming this trend, there are already some projects using or planning to use SGX:

Intel SGX is an interesting technology that shows one path toward more security in cloud computing. Today’s cloud platforms offer many advantages, but the risk of trusting the provider with full access to user data is a no-go for many deployment scenarios. To eliminate this risk (or make it acceptable), technology needs to provide shielded execution of unmodified server applications on an untrusted cloud host. SGX may bring us one step closer to a true utility computing model for the cloud, where the provider offers resources (computing, storage and networking) but has no unrestricted access to user data.
