Human and AI Art

Marisa Tschopp

What is human consciousness? Can machines be conscious? The new research area of machine consciousness aims to shed light on this enduring mystery, including the ethical and social implications that come with it. Various scientific disciplines, from philosophy to physics, join the debate and contribute valuable insights to the puzzling and fuzzy question of which societal status machines with intelligent systems have now and will have in future generations.

The neuroscientific perspective was at the center of the previous review of the human-machine comparison, which focused on the architecture of human mental processes, the parallels to synthetically reconstructed cognitive processes, and the question of who benefits from whom. The plea is clear: the approach must be scientific in order to reach professional and realistic conclusions. All forms of polemics, idealism and opportunism are counterproductive for a discourse about the mega topic AI.

When it comes to cognition, a scientific approach seems quite natural, as cognitive neuroscience has gained great momentum and is now widely accepted in academia, owing to its focus on empirical, quantitative methods. Research and development have made great progress in reconstructing human neuronal structures, yet in the end one question remains: what brings life to this physiology? How does the nervous system manage to create consciousness through its functions? This is the classic mind-body problem, a very old puzzle that has fascinated humanity for a long time. The mind-body debate is rekindled by the question of whether consciousness can be attributed to machines the more they resemble human architectures.

Scientists working in empirical research tend to fear and avoid the topic of consciousness. “Real” scientists avoid setting off into the unknown fields of consciousness research, because one has to deal with questions that cannot currently be answered quantitatively and with answers that touch upon very unscientific issues, from Freud to God, all of which must be handled without judgement.

Recently, consciousness research has found its way back into academia, one reason being the tremendous developments in neuroscience and computer science. The field has strong legitimacy; however, research on machine or artificial consciousness is still in its infancy. Yet the media have covered heated debates about the legal status of machines, for example regarding the abuse of machines, as seen in a video by Boston Dynamics that drew sharp criticism from some robo-activists.

Serious consciousness research is a balancing act between the humanities, theology and the natural sciences, and has also found its way into psychology as an empirical science. Not long ago, the influence of cognitive processes on human behavior was the big mystery; that has changed drastically. Concerning consciousness, mystery is still the name of the game. Clearly, no accepted framework exists so far, only many ideas, for example the materialist idea that the brain creates consciousness as a form of illusion.

Zimbardo and Gerrig define consciousness as a state of recognizing inner states and the external environment (2008). Fundamental areas of research in psychology are:

This review focuses on the content and functions of consciousness, as the other areas seem less relevant within the human-machine context. Regarding content, a variety of complex constructs, such as thoughts, feelings or perception at a specific point in time, are within the scope of research. Four kinds of content are distinguished: preconscious memory content, unattended information, unconscious processes that influence the respective content of human awareness, and processes that run without any active control, such as the regulation of the nervous system.

The role of consciousness can be explained from an evolutionary perspective, in the sense of “survival of the fittest”. The underlying rationale is that consciousness evolved because it helped ensure the survival of the species. Consciousness brought new skills such as restrictive reception of information, selective memory, and a planning function, also called executive control, whose objective is to interrupt action and consider alternatives and consequences (Zimbardo and Gerrig, 2008).

Looking for physical explanations of consciousness, David Chalmers, professor at New York University, sees consciousness as a kind of anomaly in science: we know nothing more intimately than our own consciousness, yet there is no objective explanation for it.

In his book The Conscious Mind: In Search of a Fundamental Theory, Chalmers not only works toward a theory of human consciousness but also discusses whether and under which conditions machines can be conscious entities. The book is, frankly, not easy to read, yet he tries to integrate philosophical as well as technical aspects into his reasoning to suit the needs of his heterogeneous readership. The topic of machine consciousness is hard to grasp at first, however, and requires quite intense examination from various perspectives.

Furthermore, using the example of the Information Integration Theory of Consciousness (or Theory Phi according to Tononi, 2004), he explains that, by analogy, a system with a high degree of complex information processing is conscious, which consequently also applies to AI. However, this raises several questions: Is there a collective consciousness? Does Canada have a consciousness? What do disruptive theories about consciousness look like? Are they radical, fundamental or universal? To answer these questions, frameworks of human consciousness must be created first. He is convinced that humanity will have a definite model of consciousness at some point, but this requires open-minded researchers with radical ideas, courage, and maybe a hint of insanity, who are willing to engage in constructive controversy.

Graziano and Webb (2014) propose a rather mechanistic theory of consciousness (the Attention Schema Theory), based on studies in cognitive psychology and systems neuroscience and published in the International Journal of Machine Consciousness. At the heart of the theory is information processing: consciousness is a tool constructed by the brain to process information successfully (attention as a method of data handling). The authors also justify the control function of attention as a central regulation mechanism from an evolutionary perspective, as explained above. As with most articles on the topic of consciousness, the article ends with the justified speculation about whether these characteristics or properties can be transferred to machines.

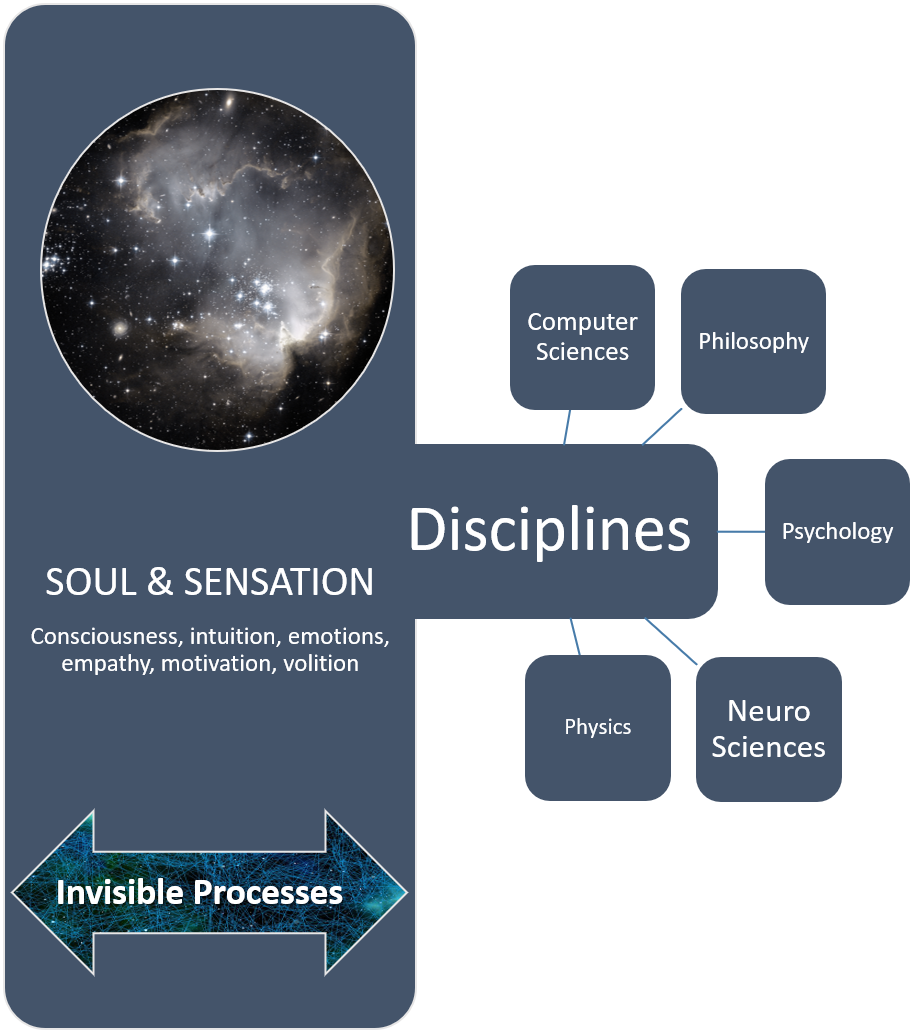

Scientific research on machine consciousness (also called artificial consciousness or digital sentience) has approached the topic with diverse frameworks from multidisciplinary perspectives. It seeks to answer questions about the goal, sense and purpose of machine consciousness, as well as its critique and its social and ethical implications. This area of research is at a pre-paradigmatic stage and hence in a state of exploration, in which radical exclusion procedures are counterproductive (Gamez 2008).

Gamez differentiates four distinct areas of research on machine consciousness, which move along the continuum of human-machine-comparison: from reproduction/simulation of human behavior and architecture to the creation of real consciousness as a biological phenomenon:

The well-known Chinese Room thought experiment by J. Searle would be attributed to the area (MC4) Phenomenally Conscious Machines. As a harsh opponent of conscious machines, Searle argues that a machine will never have a mind or consciousness, because “understanding” is merely simulated. The logic of computers follows a purely formal structure (syntax), which orders symbols according to clear rules and hence only emulates understanding. Humans, by contrast, have a mind and consciousness and are able to attribute meaning and content to words and language (semantics).
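To make the syntax-versus-semantics point concrete, the following is a minimal sketch of the kind of purely rule-based symbol shuffling that the thought experiment describes. The rule book, the placeholder symbols and the function name are all hypothetical and chosen only for illustration; the sketch is not taken from Searle or from any of the cited works.

```python
# Hypothetical rule book: maps an incoming symbol string to an outgoing one.
# The entries are meaningless placeholders; nothing in the program "knows"
# what they stand for.
RULE_BOOK = {
    "squiggle squiggle": "squoggle squoggle",
    "squoggle": "squiggle squoggle squiggle",
}

# Hypothetical default reply for inputs the rule book does not cover.
FALLBACK = "squiggle"


def chinese_room(symbols: str) -> str:
    """Return the reply prescribed by the rule book.

    The lookup compares shapes of symbols only (syntax); no meaning
    (semantics) is represented anywhere in the program.
    """
    return RULE_BOOK.get(symbols, FALLBACK)


if __name__ == "__main__":
    print(chinese_room("squiggle squiggle"))
```

From the outside, the replies may look appropriate to someone who can read the symbols, which is all a behavioral test would check. On Searle's view, such rule following alone never amounts to understanding.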

The Turing Test (named after Alan Turing), on the other hand, which also tests whether a machine can think and act like a human, is satisfied with a mere simulation of human behavior, as long as the procedures are good enough to produce the correct results. In other words, a Turing Test merely tests whether a machine is distinguishable from a human being, disregarding the role and methods of the actual inner processes. At this point an important overlap with another issue comes into play, namely the distinction between weak AI and strong AI. Strong AI would correspond to the (MC4) area. Weak AI and the topic of Artificial General Intelligence, a term coined by Goertzel and Pennachin (2007), try to replicate human intelligence fully (MC1-3).

However, a complete replication implies full understanding: If we don’t understand how human consciousness is produced, then it makes little sense to attempt to make a robot phenomenally conscious. (Gamez, 2008, p. 892)

So, generally speaking, it would make sense to first understand the one thing before applying it to other complex systems. But history indicates the contrary: a progressive approach can be successful, and radical ideas from different disciplines have led to huge insights that are beneficial across disciplines, as described in the last article regarding the Human Brain Project. Gamez keeps scientists on board with his fundamental argument that there is no legitimate reason why the physical structure of a computer should be less likely to create consciousness than the physical structures of the brain, given the premise that consciousness is a biological phenomenon and not something spiritual or an illusion (2007).

Neuroscientists will never fully understand how the brain works without understanding consciousness, and engineers will never build fully capable computers without designing them into some version of the same tool. (Graziano & Webb, 2014, p. 1)

So what? That is the question all scientists should bear in mind. What are the benefits of researching consciousness, whether human or machine?

From a mechanistic perspective, all functions currently attributed to consciousness are equally beneficial for human and artificial intelligence. A scheme for regulating and simplifying information (instead of accurately aggregating it) can be built in to increase the efficiency of information processing and to save energy. Another function, not discussed so far, is social cognition. In this context, social cognition means that humans attribute consciousness to other humans, which can be seen as evidence for the existence of their own consciousness. This can be transferred to machines, so that the design of a machine incorporates the capability to predict the behavior of others. This is especially important in social contexts in which intelligent machines interact with human beings, for example as a soldier in a military unit or as a tour guide in a museum.

In conclusion, it can make sense to proactively integrate machine consciousness into the design process in order to benefit from its functions:

The naïve approach of waiting to see if computers become conscious as they become more complicated has not yet yielded a satisfactory result. It may be more effective to design a machine in such a way that it concludes it has consciousness and can report that conclusion. The machine could use that self-model to regulate its own data flow and to understand the behavior of others. (Graziano and Webb, 2014, p. 11)

Or do we simply have to accept, as Searle cynically puts it, that machines have consciousness just as humans know they have consciousness but cannot really prove it?

If so, what should a conscious machine look like? Aleksander and Dunmall (2003) define five prerequisites needed to create a machine with consciousness:

Examples of robots used to study machine consciousness are CRONOS, Cicerobot and Cyberchild, which integrate several aspects of the environment, physiology and internal processes.

Last but not least, all researchers analyzing machine consciousness will be confronted with legal and ethical questions and implications sooner or later. This can happen in various scenarios, much as the animal protection movement has confronted research and practice following the acknowledgement of animal consciousness. The apocalyptic rise-of-the-robots discussions are as relevant as the issue of the legal status of a machine as a natural person or an object. The current debate about responsibility for self-driving cars reflects the core of the ethical debate.

Whereas developers are held responsible in classical systems, the boundaries blur when self-learning systems learn from the environment or when machines are attributed some kind of consciousness (Gamez 2008). One must not forget that in ancient Rome slaves did not have the status of persons but were treated as objects (Latin: res) before the law. However, it seems quite unrealistic that a movement like #robotlivesmatter will gain serious importance. It is nevertheless highly advisable to include these topics in every stakeholder analysis, even if that sounds quite abstruse, because a small, unnoticed movement that is not taken seriously can suddenly have devastating consequences for a company or a state. For an in-depth discussion of how to determine the legal status of machines, Calverley (2005) is recommended.

Machine consciousness is a new, complex and interdisciplinary research area that gives many people a huge headache. It is tightly interwoven with AI, and although it would be easier to neglect the topic, it is indispensable for everyone who wants to approach AI seriously and from a systemic perspective.

It has aroused interest from many disciplines, such as philosophy, psychology, neuroscience, computer science and even physics. With its distinct approach, every discipline contributes to deciphering the mystery of consciousness and demystifying what has been incomprehensible so far.

It is hard to predict how far the research field of machine consciousness will establish itself in science, but there is certainly no rational reason to stop research endeavors within the next 10-20 years. Even if there are no developments in the area of machine consciousness, or it is proven that machine consciousness does not exist, the research will definitely contribute to the knowledge and frameworks of human consciousness. This will hopefully lead to beneficial developments in the fields of health, politics and jurisdiction. More knowledge about the benefits and functions of human consciousness will lead to better machines and systems, which in turn will serve humanity in our own and future generations.

Aleksander, I., & Dunmall, B. (2003). Axioms and tests for the presence of minimal consciousness in agents. In O. Holland (Ed.), Machine consciousness. Exeter: Imprint Academic.

Calverley, D. J. (2005). Towards a method for determining the legal status of a conscious machine. In Chrisley, R., Clowes, R., & Torrance, S. (Eds.), Proceedings of the AISB05 symposium on next generation approaches to machine consciousness, Hatfield, UK.

Chalmers, D. J. (1996). The Conscious Mind: In Search of a Fundamental Theory. Oxford University Press.

Gamez, D. (2008). Progress in Machine Consciousness. Consciousness and Cognition, 17, 887-910.

Goertzel, B., & Pennachin, C. (Eds.). (2007). Artificial general intelligence. Berlin: Springer.

Graziano, M. S. A., & Webb, T. W. (2014). A mechanistic theory of consciousness. International Journal of Machine Consciousness, 6, 163.

Tononi, G. (2004). An Information Integration Theory of Consciousness. BMC Neuroscience, 5, 42.

Zimbardo, P., Gerrig, R., & Graf, R. (2008). Psychologie. München: Pearson Education.
