Human and AI Art

Marisa Tschopp

This is how you produce a face with a voice

The Titanium Research Department has been monitoring developments in the field of digital assistants (often referred to as conversational AI) for several years. Various topics have already been covered, starting with how voice assistants work, their safety aspects and development potential, and the role of anthropomorphism, i.e. the humanization of machines. The core statement of the article on anthropomorphism is that only a conversation with a voice assistant that is as natural as possible will lead to great success in terms of acceptance and benefit. We are still quite far from that today, despite impressive technological developments such as the restaurant reservation made by Google Duplex, which quite successfully passed itself off as a human being to the restaurant. Building on this initial work, and embedded in a larger interdisciplinary research project, the A-IQ was developed: a kind of IQ test for voice-controlled digital assistants, intended as a playful way of exploring the competencies of voice assistants. The knowledge gained was put into practice in the development of an Alexa Skill for VulDB (a vulnerability database that documents vulnerabilities and thus supports administrators and security experts in their work).

With this approach of Research from Within, we want to continuously build up a deep understanding of spoken language as a control instrument of the future, on both a theoretical and a practical level. Voice User Interfaces are considered to have great potential, but a number of open questions remain about how to design the implementation of this technology in companies. Because of their complexity, these questions urgently require a transdisciplinary dialogue.

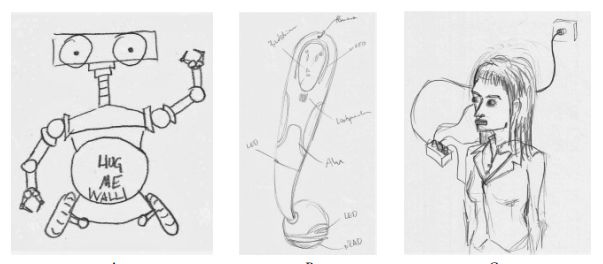

If VUIs had a relationship status, it would be: It’s complicated. There are no off-the-shelf applications that can be bought quickly without creating further dependencies. Development and implementation need commitment, time and money, because how a voice interface is programmed has an effect on perception: whether the digital assistant says Hey or Good afternoon, whether it has a female, male or synthetic voice, whether it makes jokes, or whether it makes recommendations on safety measures during the corona crisis. When a person hears a voice, an image inevitably forms in their head. In a fascinating study at Johannes Kepler University Linz, researchers had participants draw pictures of how they imagined a machine, depending on the sound of its voice. For example, machines with a human voice were more often drawn with a nose, while machines with a synthetic, computer-generated voice were more often given wheels (Mara et al., 2020).

From the point of view of consumer psychology it is useful to understand how the different variables affect the perception of the individual user and what effect this has on acceptance and thus user behaviour. One of these variables is the so-called Brand Persona.

In the context of Voice User Interfaces where no avatar or mascot (such as Clippy) is associated with the voice, we are (in short) talking about a Brand Persona, which is a purely mental manifestation in the minds of the users. A bundle of impressions of a voice application becomes a mental picture that is supposed to reflect the values or image of a company and the needs of the target group, in combination with the task to be performed by the voice application. This is important to understand, because:

Invisible Interface is not Faceless (Study by Metadesign on Voice Branding)

Wally Brill, Head of Conversation Design Advocacy & Education at Google, has been working for over 20 years on the question of how to give a voice application a character, and experiments with the various variables that are supposed to shape the image in the minds of consumers.

The way of speaking, or the jargon, seems to be particularly important. It enables the programmers to influence the perception of the brand persona in a targeted way. The simplified example of how many ways there are to say ‘yes’ shows how complex it is to choose the right words. Does the assistant speak rather informally and humorously or, as in the example of SBB, rather formally and unemotionally?

| Register | Examples |
|---|---|
| Formal YES | Yes, Gladly, Ok, [confirmation in the form of paraphrases…] |
| Informal YES | Sure, Okidoki, mhm, yup, 👍 [emoji thumbs up…] |

The example of the brand persona quickly makes one thing clear: if an organization does not know its image, its values, its relationship to the user and its tasks, it becomes difficult to choose the right ‘Yes’. How should the digital assistant appear to the customer? As a servant? As a boss? As a friend and helper? These questions have to be answered before a voice application goes into technical development.
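To make the choice of the right ‘Yes’ concrete, here is a minimal sketch of how a brand persona's register could select a confirmation phrase. The phrase lists are taken from the formal and informal examples in this article; the `BrandPersona` class, the `confirm` function and the persona names are hypothetical and only serve as an illustration, not as how any particular voice platform works.

```python
from dataclasses import dataclass

# Phrase lists based on the formal/informal 'yes' examples in this article.
CONFIRMATIONS = {
    "formal": ["Yes", "Gladly", "Ok"],
    "informal": ["Sure", "Okidoki", "Mhm", "Yup"],
}


@dataclass(frozen=True)
class BrandPersona:
    """Hypothetical, heavily simplified persona: a name and a speech register."""
    name: str
    register: str  # "formal" or "informal"


def confirm(persona: BrandPersona, turn: int = 0) -> str:
    """Return a confirmation phrase that matches the persona's register.

    The turn number rotates through the phrases so that the assistant
    does not repeat the same word in every turn of the dialogue.
    """
    if persona.register not in CONFIRMATIONS:
        raise ValueError(f"unknown register: {persona.register!r}")
    phrases = CONFIRMATIONS[persona.register]
    return phrases[turn % len(phrases)]


# Two illustrative personas (names are made up for this sketch).
formal_persona = BrandPersona(name="Formal assistant", register="formal")
casual_persona = BrandPersona(name="Casual assistant", register="informal")

print(confirm(formal_persona, turn=0))
print(confirm(casual_persona, turn=1))
```

Even in this toy form, the register has to be decided before a single line can be written, which is exactly why the organizational questions come first.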

On a side note: With a voice project you can kill two (or more) birds with one stone. From an organizational psychology point of view, this preliminary exercise is worthwhile in itself: in a participatory process, employees and managers work together on these exciting questions of corporate culture and look for creative ways to communicate their mission to the outside world. This can have a positive effect on the employees’ identification with the company, like a kind of team-building measure, which in the ideal case even brings economic benefits.

In this context, the difference between chatbots and voicebots also deserves attention. Voicebots are not simply voicified chatbots or FAQs. They differ from chatbots in many ways, especially in the design of the conversations and the brand personas, as Karen Kaushansky, Conversational Designer at Google, stresses in many talks and reports. Text and voice work completely differently and offer different design options: a text-based chatbot can show more options, while a pure voice application without a screen should be limited to two or three answer options. For example, our Alexa Skill for VulDB offers two or three spoken suggestions, while more information can be displayed on the screen of the Amazon Echo Show.

However, a spoken dialogue is based on a completely different pattern or design. A dialogue can run in many different directions. A text, on the other hand, is guided in a controlled manner and is often very logically structured. This does not mean that dialogues cannot be logical, but they appear in a completely different way. The decisive difference can be shown very well by comparing it with the chatbot model: In a chatbot, it is always possible to offer several options for the further course of the conversation via text output (The design of a voice user interface – anything but simple, by Farner Lab, 2020).
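The rule of thumb that a pure voice application should offer only two or three options, while a screen can show more, can be sketched as follows. This is an illustrative assumption about how such a rule might be coded, not the implementation of the VulDB skill or of any platform SDK; `MAX_SPOKEN_OPTIONS`, `join_spoken` and `present_options` are hypothetical names.

```python
# Assumption: the upper end of the "two or three" spoken options mentioned above.
MAX_SPOKEN_OPTIONS = 3


def join_spoken(items: list[str]) -> str:
    """Join items the way they would be read aloud: 'a, b or c'."""
    if len(items) == 1:
        return items[0]
    return ", ".join(items[:-1]) + " or " + items[-1]


def present_options(options: list[str], has_screen: bool) -> dict:
    """Build a response with a short spoken part and an optional screen part.

    Only the first few options are spoken; the full list is shown
    only if the device has a screen.
    """
    if not options:
        return {"speech": "There is nothing to choose from right now.", "display": []}
    spoken = options[:MAX_SPOKEN_OPTIONS]
    speech = "You can choose " + join_spoken(spoken) + "."
    display = list(options) if has_screen else []
    return {"speech": speech, "display": display}


# Made-up option labels for illustration only.
options = ["recent entries", "top entries", "search", "statistics", "help"]

voice_only = present_options(options, has_screen=False)
with_screen = present_options(options, has_screen=True)

print(voice_only["speech"])
print(len(with_screen["display"]), "options on screen")
```

The spoken part stays the same on both devices; the screen merely adds what would overload a listener.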

Above all, however, it must be remembered that designing the brand persona is not the first or most important step. First, the use case, the type of access (e.g. mobile phone or smart speaker) and the multimodality (e.g. screen or no screen) must be clarified.

Let’s go back to the SBB example: What distinguishes the Google Action as a voice application from a text-based chatbot? For those who are already voice users, it is simply convenient and matches their preferences. But can Silba also win over people who are not yet #Voicefirst followers? Our conclusion is: yes and no. Is this an ideal use case for a Google Action?

On a playful level, the nice SBB sound certainly gives the application a personal touch. On the other hand, the normal Google Assistant already produces very good results when you ask, for example, for the next train to Zurich. So a few questions about the SBB Google Action remain unanswered, even though the application certainly deserves kudos for its attention to detail. It remains to be seen whether Silba will prevail; in any case, the use case does not stand out very clearly. One of the most important steps in the design process is to identify exactly this kind of clear use case, the Raw Chicken Moment. The raw chicken moment is the aha moment: it refers to a situation in which both hands are occupied, which makes a voice application really useful.

In addition to the technical and functional aspects covered in previous reports, this article focused on the topic of brand persona in voice user interface design. Brand personas are a part of the design process that shapes the image of the voice application, i.e. how consumers perceive it. Through its design elements, the brand persona influences the trust and sympathy of consumers and thereby affects usage and adoption behavior. Before a brand persona is designed, two requirements must be met: (1) there is a good use case (raw chicken moment), and (2) the necessary infrastructure, data and resources for a voice application are available. A readiness check can help here, such as this Voice Readiness Check. What happens when the basis is right?

The development of the brand persona is perhaps not the most critical of all tasks in voice user interface design, but it is certainly one of the fun, creative tasks that can also be valuable on the level of corporate culture.

This article was written within the course Voice User Interface Strategy of the Lucerne University of Applied Sciences and Arts. Contents reflect the opinion of the author and are based on the lectures of Markus Maurer (Farner Lab), Karen Kaushansky and Wally Brill (Google Assistant Team) and Dr. Laura Dreessen (VUIagency).

Mara, M., Schreibelmayr, S., & Berger, F. (2020). Hearing a Nose? User Expectations of Robot Appearance Induced by Different Robot Voices. In HRI’20 Companion: ACM/IEEE Int. Conf. on Human-Robot Interaction, March 23-26, 2020, Cambridge, UK.
